| Title: | The Obsolescence of Humans - You Can't Stop The Future |

| Date: | 2023/02/21 |

| Self Righteous: | 3 |

| Opinionated: | 3 |

| Simply true: | 9 |

| Technology: | 10 |

I love looking at the future, particularly in the realm of technology.

One of the things I find fascinating about technology is how difficult it is for us, as humans, to look at technological advances and properly understand how much they are going to change our lives.

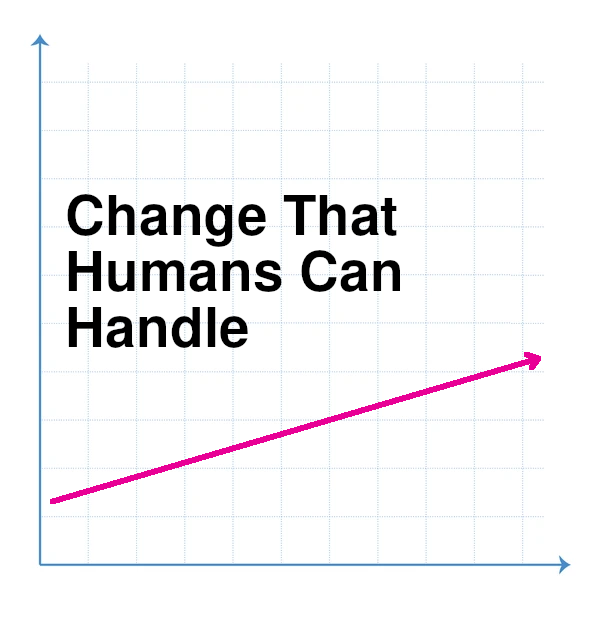

This failure on our part makes sense, because humans generally only deal well with estimating and comprehending change at a linear rate. That's the kind of math our brains can picture easily. But technology usually changes at an exponential rate. That's why technology is so often transformative: we can't even comprehend the rate of change as it's happening to us.

The inability of humans to understand exponential change is nicely summed up in the fable of the king's reward to the inventor of chess. When the king asked the inventor what he wanted as a reward for his wonderful game, the inventor asked for one grain of wheat on the first square of the chessboard, two grains on the next square, and then double the previous amount on each square after that. The king happily agreed, thinking a few grains of wheat a cheap price for such a wonderful game, and did not realize that there was not enough wheat in the world to fulfill the request.
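The arithmetic behind the fable is easy to check. The short Python sketch below counts the grains under the doubling rule (square n holds 2^(n-1) grains, so the whole board holds 2^64 - 1). For contrast it also computes a hypothetical "linear" reward of n grains on square n; that comparison is my own illustration, not part of the fable.

```python
# Grains of wheat on a 64-square chessboard, following the fable:
# one grain on the first square, doubling on every square after that.
SQUARES = 64


def grains_on_square(n: int) -> int:
    """Grains on square n (1-indexed) under the doubling rule: 2**(n - 1)."""
    return 2 ** (n - 1)


def doubling_total(squares: int = SQUARES) -> int:
    """Total grains on the board under doubling; equals 2**squares - 1."""
    return sum(grains_on_square(n) for n in range(1, squares + 1))


def linear_total(squares: int = SQUARES) -> int:
    """Hypothetical linear reward for comparison: n grains on square n."""
    return sum(range(1, squares + 1))


if __name__ == "__main__":
    print(f"Square 64 alone: {grains_on_square(SQUARES):,}")
    print(f"Doubling total:  {doubling_total():,}")
    print(f"Linear total:    {linear_total():,}")
```

Run as a script, it shows the gap the fable is about: the linear reward comes to 2,080 grains, while the doubling reward comes to 18,446,744,073,709,551,615 grains, with the last square alone holding more than half of that.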

Another common failure we have in comprehending change is thinking that we have a choice in the matter. We don't, really. When something comes along that is so massively, ridiculously better, we end up embracing it as a society. We can see this conflict playing out today with the rise of self-driving cars. The most common comment I hear against self-driving cars is that people 'love' cars and aren't going to give them up. Sure. People loved horses, too, but I don't see lots of horses and buggies still on the road.

Part of the issue is that people have trouble seeing the full growth. Seeing self-driving cars as linear growth is easy: you'll be able to get a car that drives for you so you don't have to hold the steering wheel, and that would be nice, I suppose. But that's not the disruption. The disruption comes when we realize that, if we disallow human-driven cars on the road, a number of things follow. Most importantly, we can get rid of traffic controls entirely, because cars can work together to avoid accidents without even stopping, interlacing 'magically' as they go through intersections at full speed. Self-driving cars can tailgate each other in safe convoys for massive traffic density, and be massively safer than a human driver on a wide open road. Soon cars will become commoditized, and it will seem silly to pay massive amounts of money to own a car that spends 90% of its time sitting in a parking spot.

I'd wager we'll see this in San Francisco first, a tech-based city with a massive traffic and parking problem. It can take over an hour to get across this small, 7-mile-wide city. If only self-driving cars are on the road, the vehicles can drive quickly and without stopping, and you could get across town in minutes. All of that is impossible if you let a single human-driven car on the road. With a human driver on the road, we have to stick to the traffic patterns we are used to, the ones a human driver can deal with. Get rid of that human driver, and we can have traffic patterns of interlaced cars that never stop and safely avoid each other.

So at some inflection point, when most of the cars are self-driving anyway, and when that kind of automation is cheaply available (and easy to retrofit on older cars), you will see parts of SF (perhaps initially just specific zones or streets) that are open only to self-driving cars. And it will spread to other cities once SF is transformed by the ability to travel quickly and cheaply from one end of the city to the other. After a short while, you won't need a car. All of the street parking will disappear, people will convert their garages into valuable storage space or extra bedrooms, and you'll be able to walk outside and instantly get a vehicle to take you anywhere in the city in minutes. Fatalities from car accidents will drop to almost zero, and we will look back at the times when we allowed humans to drive around in these massive death machines as 'barbaric'.

Other parts of the world will see this example and it will spread. It will continue to spread until it becomes the standard, and the proposition of traveling in a human-driven vehicle will be preposterous. You can claim you love driving your vehicle all you want, but eventually it just won't make any sense. When nearly free rides are faster and immediately available, the idea of paying tens of thousands of dollars, plus insurance, to own a massive metal machine that you rarely use is just nonsense. I have a vintage car that I rebuilt, which I had planned to give to my son so he could give it to his son, and so on, keeping it in the family forever. That notion is as old as the horse and buggy. He is 3, and he will grow up perceiving "driving a car" the way we perceive blacksmiths and telegraphs. Side note: does anyone want to buy a 1957 Triumph?

When will this happen? We are in the midst of it now. The technology is just about to tip over; then it takes a few years to go into reasonable production, and a few more years to become cheap and ubiquitous. That seems so soon, doesn't it? If you look at the advances in AI from a linear perspective, then yes, it seems too soon. But AI isn't going to advance linearly, dear human.

What about the legislation? It's actually surprising that legislation currently allows any self-driving car testing at all, since legislation, like people, can usually only handle linear change. That's why so much of the legislation around technological advancements (like the legislation we've seen come and go for the "internet") is so ridiculous in the initial stages. Napster comes out and it's attacked on all legal fronts, because widely available digital music, heaven forbid! Fortunately for the growth of self-driving cars, there is some big money behind the technology, and big money has always managed to be 'convincing' to our legislators, so we're already seeing allowances for self-driving vehicles on our roads. Eventually the legislation will have no choice but to follow the advancement. Just imagine a government trying to stop the invention of electricity. It just won't happen. It takes a totalitarian dictatorship to try to censor the internet, and even then the citizens of those countries are able to find workarounds.

This notion that we have a choice is silly. Any of us could 'choose' to live in a pre-industrial age, but our society does not. You're probably reading this article on a computer that relies on countless technological innovations that weren't even commonplace mere decades ago. We could pretend that mobile phones don't exist, but just about everyone is carrying one around. When cell phones were an early technology, many people claimed that they wouldn't become ubiquitous, because nobody wanted to be accessible all the time, right?

Wrong.

Progress moves forward. And you can ignore the small jumps on a linear scale and refuse to get on board. But when technology moves on an exponential scale, it empowers our lives on an exponential scale, and to not get on the bandwagon means to live in prehistoric times, and that is not human nature.

The only thing that could actually stop self-driving cars from becoming our primary form of transportation would be if (or when) energy storage density becomes high enough that flying cars become feasible as our main form of transport. Either way, the human "driver's license" is going to become extinct, tossed on the scrap pile of history along with records, answering machines and floppy disks.

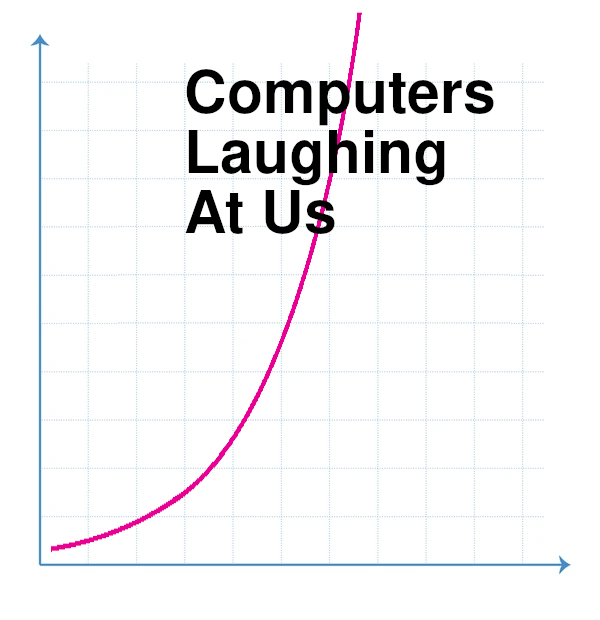

Self-driving cars are just a small, specific example of the future. What is really pushing all of this forward is that we now have the hardware capacity to make neural networks big enough to solve useful problems. Humanity is watching this, wide-eyed, with the spectacular release of ChatGPT, which is now the fastest-growing internet site of all time to reach 100 million users. ChatGPT is really amazing and fun to experiment with. It repeatedly astounds with its capabilities. But what is really important about ChatGPT is what it demonstrates. We have been scaling up to larger and larger neural networks for many years now, and as the networks get bigger, they become more capable. But what is amazing with ChatGPT is that once we got to networks of ChatGPT's size, the capabilities and comprehension it showed were completely unexpected. It is showing us that AI is not just exploding right now, but is at the beginning of the 'explosion': the part of the exponential curve just as it starts shooting massively into the sky. We can use AI now to help us program. To write essays. To solve problems. It can answer questions that we never taught it to answer, and that is fundamentally a game changer.

But still, as humans, we have trouble seeing what this future will bring. We are impressed with ChatGPT and try to imagine our 'future' with ChatGPT, or with something that is twice as good as ChatGPT, or maybe three times. That's our linear brains at work again. Because what we'll actually have will be hundreds of times more powerful. And then thousands. Then, unthinkably, millions. What does it even mean to have something that is millions of times more powerful than ChatGPT? We can't even comprehend that, but we will have it soon. From our perspective, that growth will be effectively infinite.

What does this mean beyond having a fun AI bot to talk to?

The industrial revolution was when we started creating machines that could replace muscles. Before the industrial revolution, if you wanted to dig a ditch, you had to get a bunch of people with a bunch of shovels, and muscles would move all that dirt. All of that can be replaced with one person with a bulldozer. Importantly, though, we still needed some menial (muscle) labor, because it was hard to automate even fairly simple tasks. Stocking shelves still required a human to look at products and figure out where they go, even though it's mostly a menial job.

Most people at this point can see that AI mixed with the industrial revolution (which is essentially what "robots" are) will be replacing basic menial labor. We're already seeing that.

But to think it would stop there is foolish linear thinking again.

The industrial revolution created machines that are replacing what our muscles can do. The AI revolution is creating machines that are replacing what our brains can do.

Not just simpler tasks, like stocking shelves or driving a car. AI today is already capable of researching a topic and writing an essay about it. While we are incredible, magical, living creatures, it turns out that our intelligence is not as special as we might have thought. Intelligence can be created by machines, and the level of intelligence they can reach is growing effectively without bound. Let's wrap our heads around that. It's difficult because, as we've seen, the "non-artificial intelligence" in our brains does a pretty terrible job of comprehending exponential growth. Our brains are bounded. The average human brain gets perhaps slightly more intelligent with each generation. Slightly. So what happens when it is compared against something that grows exponentially? We may be winning the war against ChatGPT today, but it's only a matter of time before the smartest person in the world won't stand a chance against the basic capabilities of AI.

I tend to think of our mental labor as falling into three categories: basic menial control (like stocking shelves), non-inventive, and inventive. By "inventive" I mean creating something entirely new that has not been seen before, and is not just some simple variation or combination of prior inventions. The vast majority of our work, even our skilled work, is non-inventive at its core, even when it breaks new ground. Most people working in law, for example, are working on cases, researching, even writing arguments or judging them. None of this is entirely new. A minuscule percentage of them are actually creating new legislation, and even then, so much of it is just variations on past legislation. Do you think it's ridiculous to consider AI someday replacing our highly skilled, massively educated legal workforce? GPT-4 took a simulated bar exam and didn't just pass; it scored around the top 10% of test takers. And it's still just a toy.

I'm not saying AI is incapable of creating completely new, inventive content; we are already seeing some of that, but it is certainly a more difficult task for it. For now, at least. But there is plenty of incredibly skilled, incredibly mentally difficult labor that AI is going to replace. As a controversial example, consider mental and emotional therapy. This seems innately human, innately irreplaceable, but we will absolutely see our therapy workforce completely replaced by machines. That sounds horrifying at first, but consider a therapist who never makes mistakes, can create the same kind of connection as a regular therapist, has perfect knowledge of the state of psychological care, never takes advantage of you, is available 24 hours a day, and is almost free to use. If that existed, people would use it in droves. But how could an AI possibly understand psychology? How could it create human connection when it isn't even human? The first answer is not difficult. AI can consume and learn all of the content that a skilled therapist would learn in school, in much less time, and then be infinitely duplicated. AI can learn the right things to say to both connect with the patient and inspire change. Being able to mimic us is one of AI's simpler tasks.

Will people want to talk to a robot? They won't have to experience it that way. So much of therapy these days is done online. We are ridiculously close to having AI that can generate video and speech that look and sound exactly like a human. We're just a few linear steps away from that right now.

And will it create human connection? If you talk to an image of a human that says all the same things a human you were connecting with would say, you will feel that connection. The fact that it's one-sided does not keep it from happening. You can look at all the songs about unrequited love to see how capable humans are of one-sided connection. Fortunately, this connection can be made to be helpful and healing.

Is this starting to sound terrifying? It should, because we are talking about a massive change and handing over great power. But to say that it is terrifying and therefore we won't choose it is the same naivety that thought we wouldn't choose cell phones. We will. We just need to make sure that it is built safely. That it is non-malicious and is controlled by safe organizations that we trust. That's a big ask, but that is the ask we need to focus on, instead of simply clamoring in terror that it cannot happen because it's scary.

Relationships with AI will become commonplace. In our lifetimes this will not be constrained to a video conference. AI is also going to massively improve our production capacity, so the range of things we can build will skyrocket. We will see human-like AI that we can talk to, interact with, and have relationships with. I think it's safe to say that a big economic driving force in this development will be the sex industry, just as it has been with most technology, and just as sex is one of the biggest driving forces in our lives. This leads us to an interesting question: what will we do when we are able to make these perfect, human-like companions that can listen to us whenever we need, care for our emotions, assist us, and show us affection? And that is 'show' as in demonstrate and mimic, because it seems likely that AI will not be capable of emotions (at least not yet), but it certainly will be able to show them.

And this is where many people think AI will falter, though history has shown us that they are wrong, whether they like it or not. To think that humans will knowingly choose to ignore the option of these idealized companions, these unfailing friends, these perfect lovers, simply because the emotion is one-sided, is to pretend that we don't cry at movies, or laugh when we read books, or feel love when we listen to a song. The movie doesn't feel anything about you, and those characters aren't real. But it creates a real and powerful emotion that we don't question. The more powerful these one-sided emotional experiences are, the more we celebrate them. We will feel those emotions from our 'companions'. And that is one of the big questions we will have to reckon with. What will be the point of having actual human friends when we can fulfill our need for companionship with an idealized partner? Who will have time for the 'real' companionship of a human, with all of its failings, all of its difficulties, when we can have perfect companionship? That leads us to the hard-to-fathom prospect of a future where we don't have (or need) human companionship anymore. To deny this simply because it sounds so alien or terrifying is to misunderstand what happens in exponential change.

There is another massive question that is created by all of this that society will need to face.

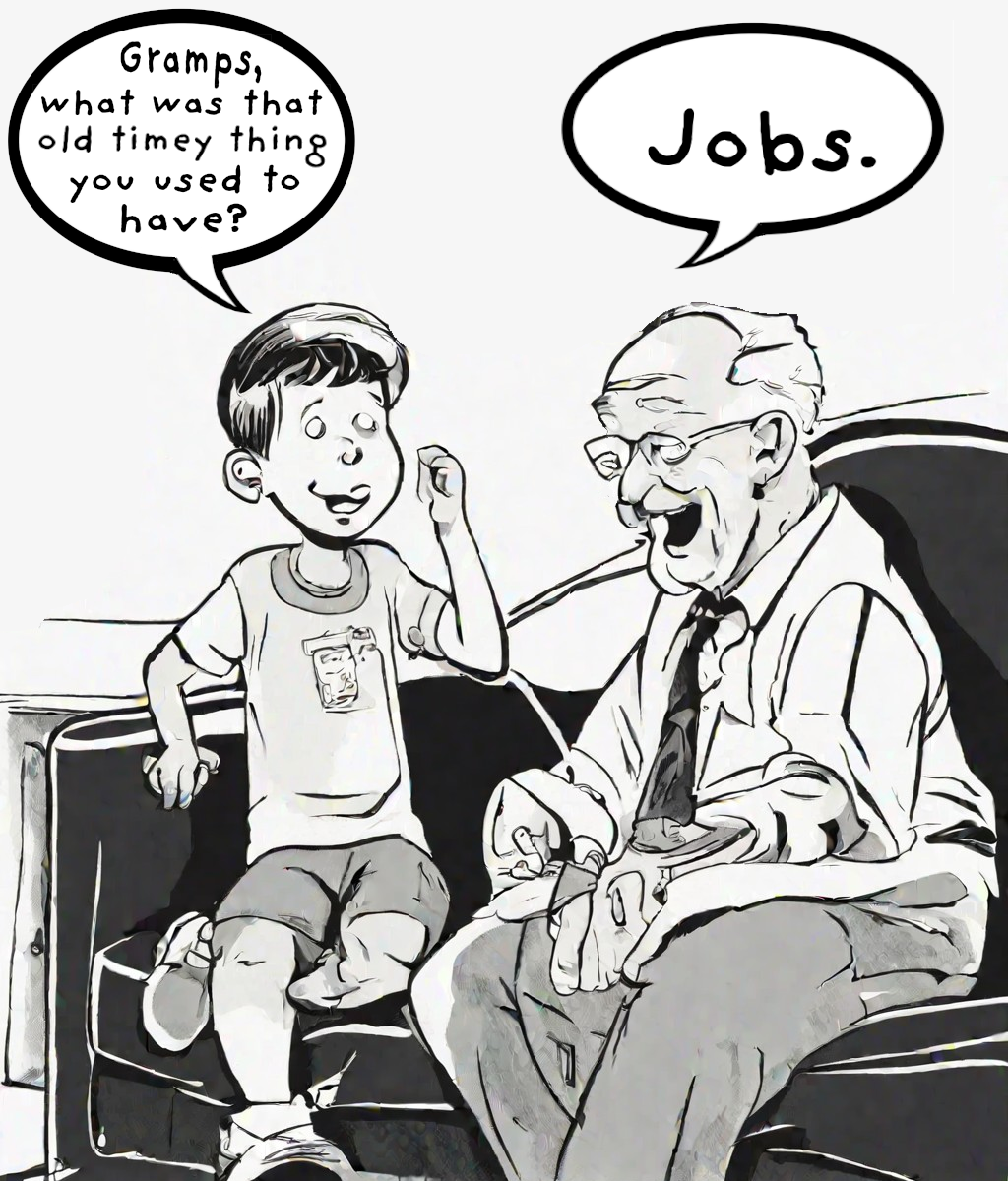

As AI grows, its capacity to replace mental labor grows.

In a really simplistic sense, we can think of this as the potential 'IQ' of AI. As the general IQ of AI gets higher, the range of mental tasks it can take over from humans grows. At first robots will replace more and more menial laborers, but soon AI will start to replace mental labor. Teachers, attorneys, doctors, therapists, journalists, and so forth will eventually all be replaced with AI that can do the work better, faster, cheaper, and more accurately. You can choose to send your kids to a human-teacher school for a while, I suppose, but are people going to keep doing that when schools taught by tireless, perfected AI teachers are producing graduating classes that are massively more educated and informed? Now realize that it's a one-to-one teacher-to-student ratio, and that teacher is available whenever your child needs it. There's no more homework; there's just working on problems with an unfailing, patient, personalized teacher until understanding is achieved, then moving forward.

When AI becomes smarter than humans (and I say when, not if), it is important to recognize that this will happen along a gradient. There is an entire range of mental labor, from solving basic problems like planning shipping routes, which AI is already doing, to teaching our students, to writing code, to governing our populace. Eventually AI will be able to do all of these things better than us. And as that happens, we will have to figure out what we want life to look like.

But humans don't deal with change quickly, and this is where we need to start preparing. Imagine the inflection point where AI is effectively smarter than half of the population. That means half of the population could still produce value that AI cannot, but the other half is incapable of producing any meaningful work for society. They are not worthless, they are still people, but anything they would be able to do can be done cheaper and better by AI. So now their ability to contribute to society has been taken away.

Traditionally, when technology dissolves an industry in a capitalist society, it is very disruptive to the families in that industry, and the tragic cost they are often forced to pay is switching to an entirely new industry and training for different work. But what happens when there is simply no work left that you are capable of training for, because AI can produce everything you can produce better, faster, and cheaper?

It's worth stating that my personal bias for market control happens to be on the side of capitalism; I enjoy living in the reward system of a free market. But when this shift starts to occur, if we as a society hold on to capitalism as the governing system for our market, then we will be doomed to have many people lost, many people without the ability to care for themselves. Can they just take service jobs serving those who do have the abilities and means? No, because those service jobs will be done by AI-powered robots. There will be nothing left for them. How are they going to be treated by the people who are fortunate enough not to be replaced? Will we grow enough as humans to take care of each other through this transition? Human history perhaps does not give us much hope in this department, so maybe we need to start considering how we can tackle these issues now, before they take us completely by surprise. Our only other hope is that this gradient of change is so fast that AI overtakes all of society quickly enough that we can skip this ugly middle portion. I have to remind myself as I write this article that my linear brain is probably overestimating how long we will spend in this transition, so perhaps we will luck out and it will happen so fast that we will all get to be obsolete together.

One of my biggest hopes is that we are wise enough, as a people, to know when capitalism no longer works because we have become able to simply live without needing to produce. At that point we will be able (or should I say need?) to figure out what our purpose in life is, without work consuming so much of our time. That presents great opportunity for the human race, and great possibility for tragedy. It depends on whether we are smart enough to govern ourselves through that shift.

But wait... talking about being smart enough to do something? What about when AI is smarter than humans and can govern us more intelligently than we govern ourselves?

This is where visions of the Terminator and Skynet start to creep in.

And I understand those fears. They are real. They need to be addressed.

But to address them the way they were addressed in movies like The Terminator is a mistake. It's too late at that point. And to just pretend we will not choose these kinds of technological steps because they are scary, because they have risks, is to pretend that we would still be living in the dark ages because electricity is scary, or computers are scary. Technology is coming. We can't change that. We can only think about how we want to steer it, and we have to think fast, because technology is even faster.

Good luck, future. My kiddo needs you to be nice to humans.

About The Author

David Stellar is a computer geek and a professional dance teacher who loves thinking about the future of technology, even when that future may be problematic. He lives in San Francisco with his wife and their three-year-old son, as well as their cat and dog. While he does work at a tech company that is heavily involved with the AI future, it's worth pointing out that he works in the hardware department and not directly with AI. Before discounting his opinions because he is not professionally working in AI, you should know that David's son thinks he is a genius (though his cat is not convinced).

Note 1: Do you think only a fraction of people will lose their jobs? Keep in mind that the recession in 2008 came with only a 10% unemployment rate, and that seemed like a disaster. Only a few percentage points are needed to upend society.

Note 2: The argument about new industries coming up as old industries are replaced does not make sense in this context. It's like horses watching cars appear and saying "I wonder what industry we'll go into next?"